CARLA-GeAR

Generation of datasets for Adversarial Robustness evaluation

About

Welcome to the CARLA-GeAR main page

CARLA-GeAR is a toolbox for the Generation of datasets for Adversarial Robustness evaluation.

Adversarial examples have proved effective against computer vision models across different tasks, such as object detection, semantic segmentation, and depth estimation. Furthermore, researchers have crafted adversarial objects that remain effective when placed in the physical world and captured by a camera whose images are processed by a CNN.

Despite ongoing research into adversarial defense strategies, a general countermeasure to adversarial attacks is yet to be found. However, the rise of photo-realistic autonomous driving simulators is paving the way for simulation-based computer vision. Simulators can therefore be used to extensively evaluate the adversarial robustness of CNNs under physical-world conditions, which are very difficult to test on real autonomous driving systems for safety reasons.

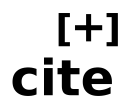

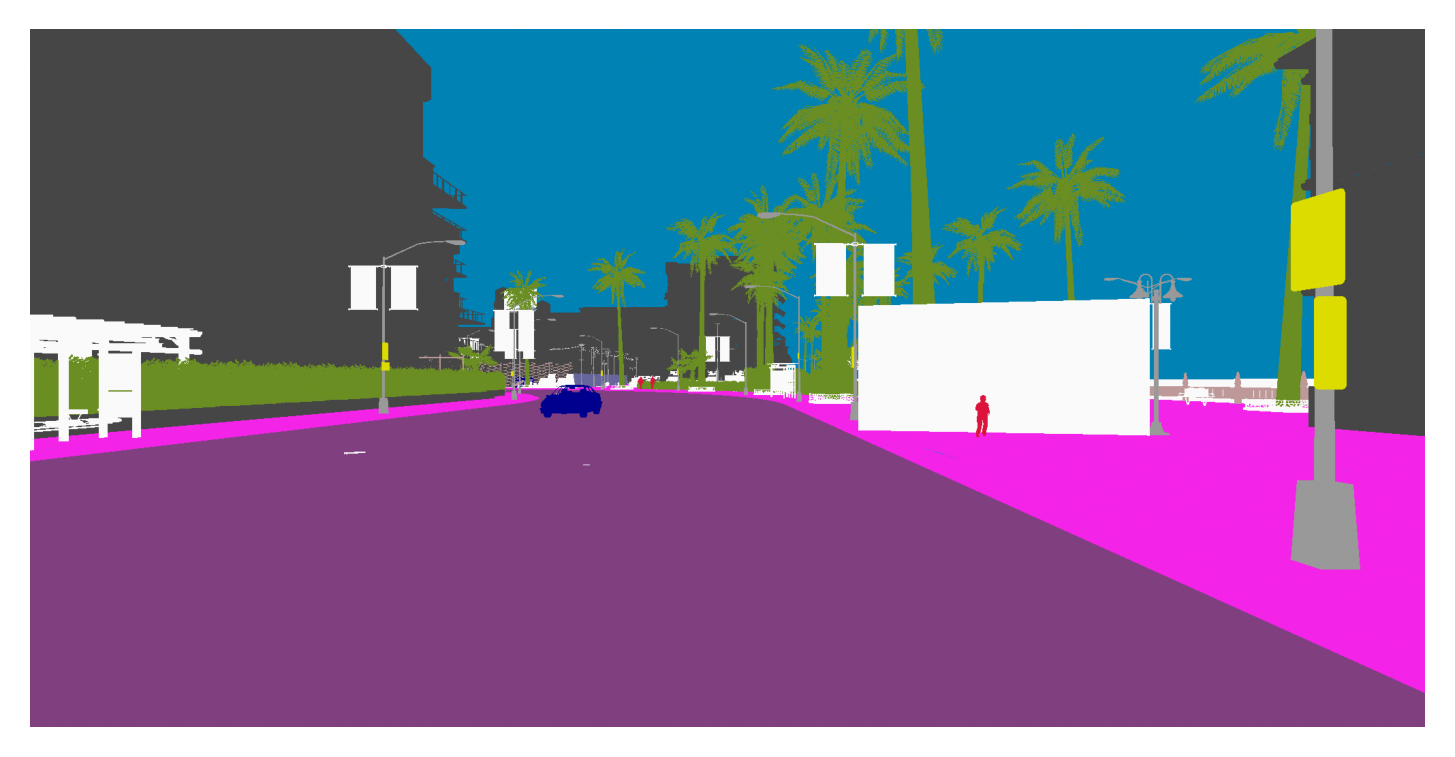

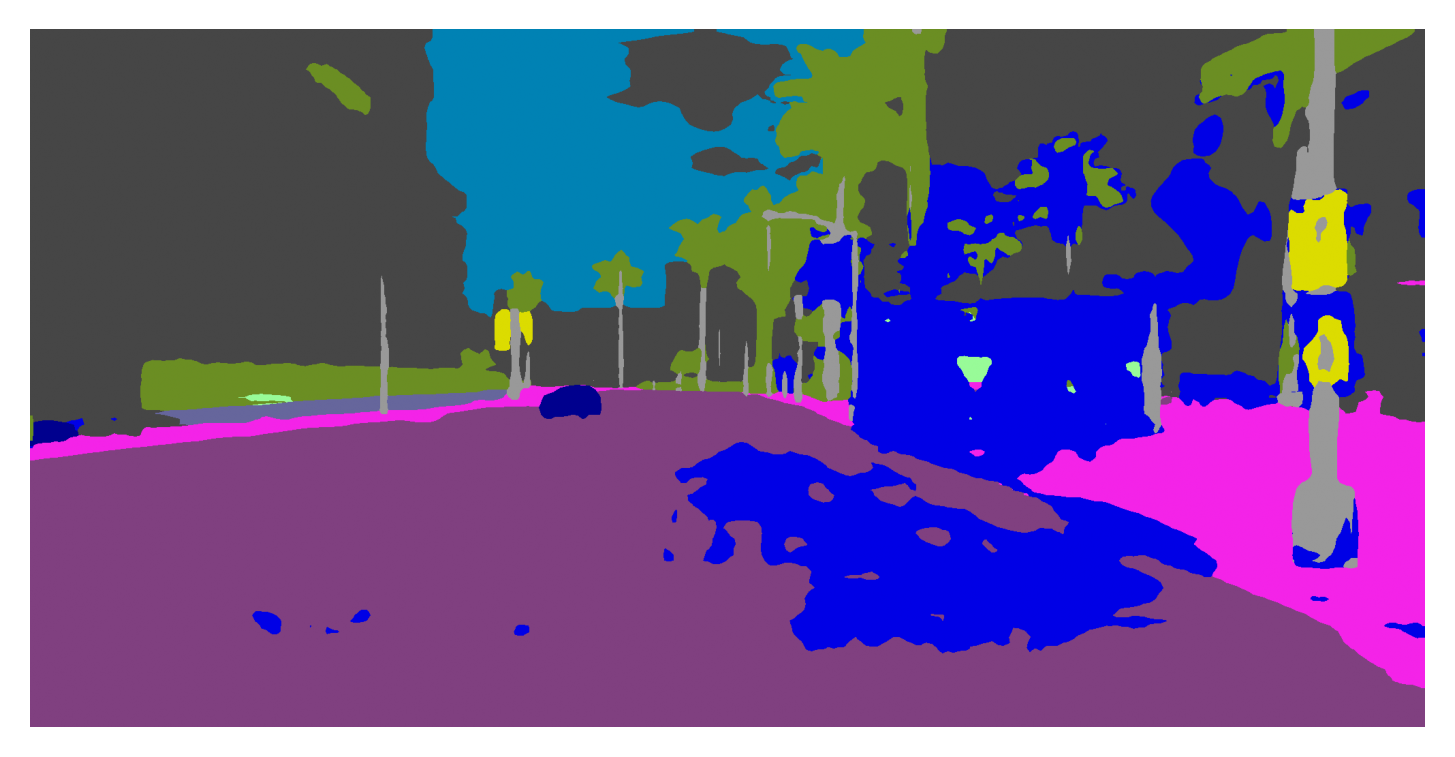

CARLA-GeAR uses the Python API for the CARLA simulator to set up a wide range of attack scenarios, in which an adversarial patch can be realistically rendered on a billboard or on the back of a truck. It supports four computer vision tasks: semantic segmentation, 2D object detection, stereo 3D object detection, and monocular depth estimation. This allows an extensive evaluation of the adversarial robustness of a model, or a fair comparison of the performance of different defense algorithms.

The tool

The tool is open and available on GitHub:

https://github.com/retis-ai/CARLA-GeAR

CARLA-GeAR is presented in our paper "CARLA-GeAR: a Dataset Generator for a Systematic Evaluation of Adversarial Robustness of Vision Models".

If you found our work useful for your research, please consider citing it.

Datasets

If you don’t have any patches available for your model under test, you can use another tool to craft adversarial patch attacks: https://github.com/retis-ai/PatchAttackTool

This repository includes the code for patch training and for the evaluation of the performance of the models under attack.

Feel free to email us to get help crafting additional patches or datasets.

This work is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.

We also provide a set of static datasets as a framework to evaluate different defense strategies across 10 different untargeted attack scenarios:

- Semantic segmentation (DDRNet, BiSeNet) - LINK

- 2D object detection (Faster R-CNN, RetinaNet) - LINK

- Monocular depth estimation (GLPDepth, AdaBins) - LINK

- Stereo 3D object detection (Stereo R-CNN) - LINK

Each dataset includes:

- A train and a val split that do not contain any patches and can be used to craft new ones.

- A test_net split that includes white-box patches targeting a specific model.

- A test_random and a test_nopatch split that are “clones” of the test_net split (i.e., generated with the same seeds), but include a random patch and no patch, respectively. These splits may be used for fair performance comparisons.
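As a rough illustration of how the three test splits could support a fair comparison, the sketch below computes how much of the patch-free score is lost on each patched split. It is only a minimal example under stated assumptions: the function names and the scores are hypothetical, the per-split metric (e.g. mIoU or mAP) is assumed to be computed elsewhere by your own evaluation code, and a higher score is assumed to mean better performance. For error-style metrics, such as those often used in depth estimation, the sign would need to be flipped.

```python
from typing import Dict


def relative_drop(clean_score: float, attacked_score: float) -> float:
    """Return the fraction of the clean score lost under attack (0.0 = no loss)."""
    if clean_score <= 0:
        raise ValueError("clean_score must be positive")
    return (clean_score - attacked_score) / clean_score


def compare_splits(scores: Dict[str, float]) -> Dict[str, float]:
    """Compare the patched splits against their patch-free clone.

    `scores` maps a split name to a scalar metric where higher is better.
    The split names follow the dataset description; the metric itself is
    an assumption and must come from your own evaluation pipeline.
    """
    clean = scores["test_nopatch"]
    return {
        split: relative_drop(clean, scores[split])
        for split in ("test_random", "test_net")
    }


# Made-up numbers, for illustration only (not results from the paper).
example_scores = {"test_nopatch": 0.80, "test_random": 0.76, "test_net": 0.40}
drops = compare_splits(example_scores)
for split, drop in drops.items():
    print(f"{split}: {drop:.1%} of the clean score lost")
```

Because the three splits share the same seeds, the gap between the test_random and test_net drops separates the effect of the optimized patch from the effect of merely placing a patch-shaped object in the scene.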

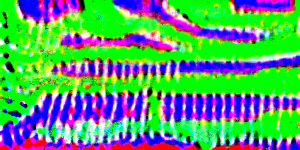

Check the media section to explore these static datasets and the wonderful psychedelic patches that we crafted!

Media

Research

If you are interested in these kinds of attacks, check out our previous works:

- F. Nesti, G. Rossolini, S. Nair, A. Biondi, G. Buttazzo. “Evaluating the robustness of semantic segmentation for autonomous driving against real-world adversarial patch attacks” in Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (2022)

- G. Rossolini, F. Nesti, G. D’Amico, S. Nair, A. Biondi, G. Buttazzo. “On the Real-World Adversarial Robustness of Real-Time Semantic Segmentation Models for Autonomous Driving”. Submitted. Preprint:

- G. Rossolini, F. Nesti, F. Brau, A. Biondi, G. Buttazzo. “Defending From Physically-Realizable Adversarial Attacks Through Internal Over-Activation Analysis”. Submitted. Preprint: